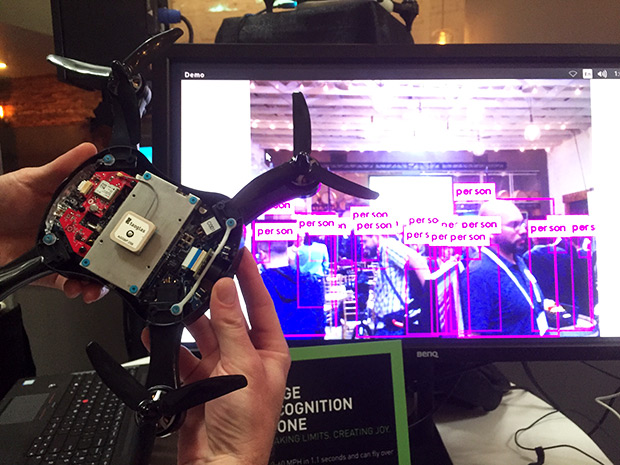

Nvidia Wants AI to Get Out of the Cloud and Into a Camera, Drone, or Other Gadget Near You

By

People are just now getting comfortable with the idea that data from many electronic gadgets they use flies up to the cloud. But going forward, much of that data will stick closer to Earth, processed in hardware that lives at the so-called edge—for example, inside security cameras or drones.

That’s why Nvidia, the processor company whose graphics processing units (GPUs) are powering much of the boom in deep learning, is now focused on the edge. Deepu Talla, vice president and general manager of the company’s Tegra business unit, says bringing AI technology to the edge will make a new class of intelligent machines possible. “These devices will enable intelligent video analytics that keep our cities smarter and safer, new kinds of robots that optimize manufacturing, and new collaboration that makes long-distance work more efficient,” he said in a statement.

Why the move to the edge? At a press event held Tuesday in San Francisco, Talla gave four main reasons: bandwidth, latency, privacy, and availability. Bandwidth is becoming an issue for cloud processing, he indicated, particularly for video, because cameras in applications such as public safety are moving to 4K resolution and growing in number. “By 2020, there will be 1 billion cameras in the world doing public safety and streaming data,” he said. “There’s not enough upstream bandwidth available to send all this to the cloud.” So processing at the edge will be an absolute necessity.